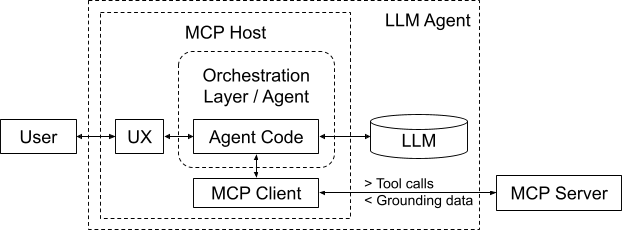

MCP-Enabled Agent Architecture

The Gracenote Video MCP Server is based on the open Model Context Protocol (MCP), first proposed by Anthropic. MCP provides a standardized way for Large Language Models (LLMs) to access external tools and data. Although the protocol originated at Anthropic, MCP servers are now supported by the major LLM providers.

The Gracenote Video MCP system uses tool-augmented (structured) generation: the LLM calls tools to retrieve data before it writes its answer. This architecture is designed to keep the final AI response grounded in accurate, real-time Gracenote metadata.

System Components and Terminology

The following is an overview of the LLM Agent system components, as well as the terms used in this document.

Familiarize yourself with these terms before starting your Agent implementation.

| Term | Definition |
|---|---|
| Context Window | The active, limited “working memory” of the LLM. It is the space where the Orchestration Layer aggregates the System Prompt, user query, conversation history, and the retrieved Grounding Data so the LLM can process them together to generate a response. |
| GN Video MCP Server | Provided by Gracenote, the server acts as a data enablement layer. It interfaces with underlying Gracenote APIs to retrieve, process, and package the Grounding Data in a format optimized for the LLM to consume. |
| Grounding Data | The factual, structured data provided by the MCP Server (via the MCP Client and MCP Host) after a tool call. This data is inserted into the LLM's Context Window, allowing the LLM to generate a truthful and relevant response (a grounded response). |
| LLM (Large Language Model) | The core machine learning model that acts as the central reasoning engine within the LLM Agent. It processes the text inputs supplied via the Context Window, determines the appropriate next action (including calling MCP Tools), and generates the final, human-readable response based on the Grounding Data it receives. |
| LLM Agent | Generally used to describe the entire AI system designed to achieve a specific goal. It is not just the client code; it is an architecture in which the LLM is the reasoning component and the Agent is the orchestration component. |
| MCP (Model Context Protocol) | An open protocol that connects LLMs with domain-specific information in response to a user query, so that responses can be verified and enriched with relevant context. |
| MCP Client | A library or service within the MCP Host that handles the low-level communication with the MCP Server. |
| MCP Host | The application or service that houses the Orchestration Layer and the MCP Client. It is the environment/client-side container where the LLM Agent's logic runs and initiates calls to the LLM and the MCP Server. |
| MCP Tools | Functions (or external capabilities) that the LLM Agent can call. These functions are implemented by the MCP Host and often leverage the MCP Client to fetch data from the MCP Server. The LLM decides which tool to call based on the user's request. |
| Orchestration Layer | The central part that orchestrates the reasoning/action loop of the LLM Agent, maintains the Context Window, provides the System Prompt and MCP Tool descriptions to the LLM, and interacts with the tools. This is the core of the agent, and it is created by a developer for a specific application. |
| System Prompt | A developer-defined template sent to the LLM by the Orchestration Layer. It sets the persona, constraints, and business rules, and can include the descriptions of the MCP Tools available to the LLM for function calling. |
| Training (or Model Training) | The process by which LLMs are built and fine-tuned: the model is fed an enormous amount of textual information. |
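
To make the MCP Client and Grounding Data entries more concrete, the sketch below shows roughly what travels between the MCP Client and the MCP Server. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and an arguments object. The tool name `get_award_winners` is the example used later in this document; the argument names (`award`, `year`) and the helper functions `build_tool_call` and `extract_text` are assumptions made for illustration, not the Gracenote schema. The sample response is hand-written and contains placeholder text rather than real metadata.

```python
import json
from itertools import count

_ids = count(1)  # simple generator of JSON-RPC request ids


def build_tool_call(name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for MCP's tools/call method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def extract_text(response: dict) -> str:
    """Join the text items of a tools/call result into one string."""
    result = response["result"]
    if result.get("isError"):
        raise RuntimeError("tool reported an error")
    return "\n".join(
        item["text"] for item in result["content"] if item["type"] == "text"
    )


request = build_tool_call(
    "get_award_winners",                      # example tool name from this document
    {"award": "Best Picture", "year": 2019},  # hypothetical argument names
)
print(json.dumps(request, indent=2))

# Hand-written stand-in for a server reply; the text is a placeholder, not real data.
sample_response = {
    "jsonrpc": "2.0",
    "id": request["id"],
    "result": {
        "content": [{"type": "text", "text": "<grounding data from the server>"}],
        "isError": False,
    },
}
print(extract_text(sample_response))
```

In practice an MCP client library would normally build and parse these messages for you; the point here is only to show the shape of the data that ends up in the Context Window.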

Most of the tasks in the LLM Agent workflow are handled by the LLM and the MCP Server. As a developer, you are typically responsible for the following components:

- Orchestration Layer: This includes creating the logic to execute the reasoning/action loop and maintain the context window. A number of orchestration frameworks exist, providing many orchestration functions and simplifying the agent implementation.

- MCP Host Application: This includes integrating MCP client(s) to communicate with MCP server(s) and providing the application UI.

- System Prompt: This includes defining the persona and rules for the LLM within the Host Application.
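
As a rough illustration of the developer-owned pieces listed above, the sketch below defines a System Prompt, one tool description, and the context sent on the first LLM call. MCP servers describe each tool with a `name`, a `description`, and an `inputSchema` (a JSON Schema object). Everything else here is an assumption: the prompt wording, the `award` and `year` parameters, the `initial_context` helper, and the generic role-based message format, which varies between LLM providers.

```python
# Persona and rules for the LLM (illustrative wording only).
SYSTEM_PROMPT = (
    "You are an entertainment metadata assistant. "
    "Answer only from tool results; if a tool returns nothing, say so."
)

# Tool description in the shape MCP uses: name, description, inputSchema.
# The parameters are hypothetical, not the actual Gracenote tool schema.
AWARD_TOOL = {
    "name": "get_award_winners",
    "description": "Look up award winners by award name and year.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "award": {"type": "string"},
            "year": {"type": "integer"},
        },
        "required": ["award", "year"],
    },
}


def initial_context(user_query: str, tools: list) -> dict:
    """Assemble what the Orchestration Layer sends on the first LLM call."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_query},
        ],
        "tools": tools,
    }


context = initial_context("Which film won Best Picture in 2019?", [AWARD_TOOL])
print(len(context["messages"]), "messages,", len(context["tools"]), "tool")
```

An orchestration framework would typically fetch the tool descriptions from the MCP Server at startup instead of hard-coding them as done here.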

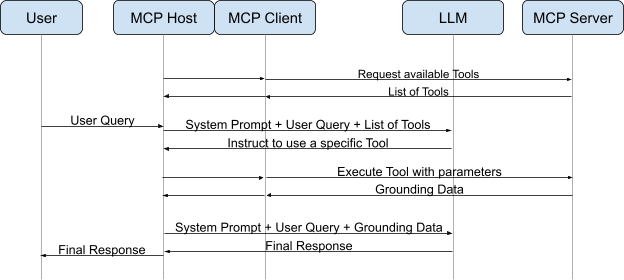

Simplified Reasoning/Action Loop

The following describes a reasoning/action loop iteration:

- User Query:
  - The user enters a natural language query into the MCP Host application (UI) describing the information they want.
  - Example: “What movie won the Academy Award for Best Picture in 2019?”

- LLM Intent Analysis & Tool Selection:
  - The Host Application sends the user query (along with the System Prompt and available MCP Tool definitions) to the LLM.
  - The LLM analyzes the intent and determines it needs external data.
  - It returns a Tool Call (structured output requesting a specific function, e.g., get_award_winners).

- Routing to Client:
  - The Host Application detects the Tool Call.
  - It passes this request to the internal MCP Client.

- Request Transmission:
  - The MCP Client formats the request using a predefined request schema and sends it to the Gracenote MCP Server.

- Tool Execution:
  - The MCP Server validates the request and executes the specific Gracenote tool logic, querying the underlying metadata APIs.

- Grounding Data Return:
  - The MCP Server receives the API response and packages the raw data into a standard object (text, image, or resource) adhering to a predefined response schema. This is the Grounding Data.

- Context Injection:
  - The MCP Client receives the Grounding Data and passes it back to the Host Application.
  - The Host appends this data to the conversation history (context window) as a Tool Result.

- Final Inference:
  - The Host Application sends the updated conversation history (Query + Tool Call + Tool Result) back to the LLM.
  - The LLM performs inference on this grounded context to generate a natural language answer.

- Display:
  - The Host Application receives the final text response from the LLM and renders it to the user via the UI.
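
The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `fake_llm` and `fake_mcp_client` are stand-ins for a real LLM API and a real MCP Client, the `ToolCall` and `LLMReply` types and the `run_turn` function are hypothetical names, the tool arguments are invented, and the Grounding Data is placeholder text rather than real Gracenote metadata.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolCall:
    name: str
    arguments: dict


@dataclass
class LLMReply:
    text: Optional[str] = None            # set when the LLM gives a final answer
    tool_call: Optional[ToolCall] = None  # set when the LLM requests a tool


def fake_llm(messages: list) -> LLMReply:
    """Stand-in for a real LLM: requests a tool once, then answers."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return LLMReply(tool_call=ToolCall(
            "get_award_winners", {"award": "Best Picture", "year": 2019}))
    return LLMReply(text=f"Based on the tool result: {tool_results[-1]['content']}")


def fake_mcp_client(call: ToolCall) -> str:
    """Stand-in for the MCP Client; returns placeholder Grounding Data."""
    return f"<grounding data for {call.name}>"


def run_turn(user_query, llm=fake_llm, call_tool=fake_mcp_client, max_steps=5):
    """Run one user turn of the reasoning/action loop."""
    # User Query: start the context with the System Prompt and the query.
    messages = [
        {"role": "system", "content": "Answer only from tool results."},
        {"role": "user", "content": user_query},
    ]
    for _ in range(max_steps):
        # Intent Analysis & Tool Selection (or Final Inference on later passes).
        reply = llm(messages)
        if reply.tool_call is None:
            return reply.text  # Display: the host renders this text
        # Routing, Request Transmission, Tool Execution, Grounding Data Return.
        grounding = call_tool(reply.tool_call)
        # Context Injection: record the Tool Call and the Tool Result.
        messages.append({"role": "assistant", "tool_call": reply.tool_call})
        messages.append({"role": "tool", "content": grounding})
    raise RuntimeError("no final answer within max_steps")


answer = run_turn("What movie won the Academy Award for Best Picture in 2019?")
print(answer)
```

The `max_steps` cap is a design choice worth keeping in a real Orchestration Layer: an LLM may request several tools in sequence, and a bound prevents a turn from looping indefinitely if it never produces a final answer.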